All Reviews

39 products reviewed for LLM inference & Stable Diffusion

Total reviews: 39 · Max VRAM tracked: 16 GB · Largest model run: 70B

The Acer Aspire 16 AI is the best budget Copilot+ PC — Snapdragon X X1-26-100, 45 TOPS NPU, 16GB LPDDR5X, 16" WUXGA 120Hz touch, Wi-Fi 7, at under $700. Most affordable on-device AI laptop available.

The Anker 777 is the best Thunderbolt 4 dock for Windows mini PC users — 12 ports, 90W charging, triple 4K display support, and Ethernet in a compact form factor at $199.

The APC BX1500M is a 1500VA/900W UPS with Automatic Voltage Regulation and 10 outlets. For AI workstation builders, it protects hours of model fine-tuning or dataset processing from a power outage by providing enough battery runtime to save work and shut down gracefully.

The Apple Mac Mini M4 Pro is the best compact AI workstation for local LLM inference in 2026. With up to 64GB of unified memory accessible at 273GB/s and a 14-core CPU, it can run 70B parameter models quantized to 4-bit with no external GPU required.
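The memory claim above can be sanity-checked with simple arithmetic: weight size is roughly parameter count times bits per weight, divided by 8. The sketch below is a back-of-envelope estimate only. The function names are hypothetical, and it assumes exactly 4 bits per weight and ignores the KV cache, activations, and operating-system memory, so real headroom is smaller than it suggests.

```python
def weight_footprint_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate weight size in GB (1 GB = 1e9 bytes).

    Assumption: counts weights only; KV cache and runtime overhead are ignored.
    """
    return params_billions * bits_per_weight / 8


def fits_in_memory(params_billions: float, bits_per_weight: float, memory_gb: float) -> bool:
    """True if the weights alone fit in the given memory."""
    return weight_footprint_gb(params_billions, bits_per_weight) <= memory_gb


if __name__ == "__main__":
    # (label, parameters in billions, bits per weight, memory in GB)
    cases = [
        ("70B at 4-bit in 64 GB unified memory", 70, 4, 64),
        ("70B at 16-bit in 64 GB unified memory", 70, 16, 64),
    ]
    for label, params, bits, mem in cases:
        size = weight_footprint_gb(params, bits)
        print(f"{label}: ~{size:.0f} GB of weights, fits={fits_in_memory(params, bits, mem)}")
```

Under these assumptions a 70B model needs about 35 GB at 4-bit, which fits in 64GB, while the same model at 16-bit needs about 140 GB and does not. Practical 4-bit formats usually store somewhat more than 4 bits per weight once scaling data is included, so treat the result as a lower bound.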

The Apple Mac Mini M4 is the most affordable path to Apple Silicon AI inference in 2026. With 16GB of unified memory at 120 GB/s bandwidth and a 10-core CPU, it runs 7B models at 40–60 tokens/second via Ollama — faster than any competing mini PC at the same price.

The ASUS Dual RTX 5060 Ti 16GB brings Blackwell architecture and 16GB GDDR7 to the entry-level discrete GPU tier, and it is the only NVIDIA option with 16GB VRAM at this price point in 2026. For local AI builders who need 13B model headroom on a tight budget, it runs SDXL and quantized LLMs fully in VRAM within a 180W power budget.

The ASUS Prime RTX 5070 SFF-Ready is a 2.5-slot Blackwell GPU built for compact Mini-ITX builds. With 12GB GDDR7 at 672 GB/s and triple Axial-tech fans with a phase-change thermal pad, it delivers full RTX 5070 performance in the smallest possible footprint — ideal for building a custom compact AI workstation.

The ASUS Zenbook S14 is the best Intel Copilot+ laptop — Intel Core Ultra 7 Series 2, 47 TOPS NPU, 32GB RAM, 3K OLED 120Hz touch display, and Thunderbolt 4. Best-in-class display with the most RAM of any NPU laptop in this roundup.

The be quiet! Pure Power 13 M 1000W is a fully modular 80+ Gold ATX 3.1 PSU built for high-TDP GPU AI workstations — with native PCIe 5.1 connector support for RTX 5080 and 4090 builds, a semi-passive 120mm fan for silent idle, and enough headroom for sustained full-load AI inference.

The Cable Matters Thunderbolt 5 cable delivers 80Gbps bidirectional data and up to 120Gbps video bandwidth — Intel Certified for full TB5 compliance. Essential for Mac Mini M4 Pro users connecting external NVMe drives or high-bandwidth displays without bottlenecking data pipelines.

The CalDigit TS4 is the gold standard Thunderbolt 4 dock for Mac Mini M4 Pro and AI workstations — 18 ports including 2.5GbE, 98W charging, and support for single 8K or dual 6K displays over a single cable.

The GEEKOM A6 packs an AMD Ryzen 7 6800H, 32GB DDR5, and a USB4 port into a compact aluminium chassis — making it the best x86 mini PC for running 14B–32B LLMs via CPU and the only budget mini PC ready for an external GPU upgrade.

The GEEKOM AI A7 MAX pairs AMD's fastest 8-core Zen 4 laptop chip with Radeon 780M RDNA 3 graphics in a compact mini PC chassis — delivering the best CPU inference speed in the sub-$400 AMD mini PC tier. With 1TB NVMe storage and USB4, it doubles as an always-on home AI server and handles 7B models smoothly via Ollama on Windows.

The GEEKOM IT12 is a business-grade mini PC with Intel Core i5-12450H and Intel Iris Xe Graphics, running local 7B–13B LLMs via Ollama. With 16GB DDR4, a 3-year warranty, and WiFi 6E, it is one of the best-built compact AI inference machines under $400 in 2026.

The GIGABYTE RTX 5070 WINDFORCE OC 12G brings NVIDIA Blackwell architecture and 5th-Gen Tensor Cores to the mid-range market. With 12GB GDDR7 at 672 GB/s, it excels at Stable Diffusion, ComfyUI, and quantized 7B–13B LLM inference — cooled by GIGABYTE's server-grade thermal gel WINDFORCE system.

The GIGABYTE RX 9060 XT GAMING OC 16G is the VRAM champion at its price tier. Powered by AMD RDNA 4 with 16GB GDDR6, it runs 13B+ LLMs comfortably where 12GB NVIDIA cards hit their ceiling. The WINDFORCE cooling with graphene nano lubricant handles sustained AI workloads — if you can navigate AMD's ROCm ecosystem.

The GMKtec M6 Ultra pairs AMD's Ryzen 5 7640HS (Zen 4, Phoenix) with 32GB DDR5 and a Radeon 760M RDNA 3 iGPU, making it one of the faster AMD iGPU mini PCs for local AI in 2026. Triple 4K display output, USB4, dual 2.5GbE, and a compact footprint round out a capable always-on AI server at a fraction of Apple Silicon prices.

The GMKtec NucBox M5 Pro is the best budget entry point for local AI inference in 2026. Powered by an AMD Ryzen 9 processor with Radeon 780M integrated graphics, it runs 7B models via Ollama on Windows 11. GPU tooling on this hardware goes through AMD's ROCm stack, which is an alternative to CUDA rather than a CUDA-compatible layer, so CUDA-only software will not run on it.

Adjustable GPU sag bracket with magnetic base and non-slip sheet. Fits GPUs 74–120mm wide. Supports heavy triple-fan cards like the RTX 5070 and RX 9060 XT during sustained AI inference runs.

The KAMRUI Hyper H2 is the most powerful Intel mini PC in its price range, powered by the 10-core Intel Core i5-14450HX running at up to 4.8GHz. With 16GB DDR4 and 512GB PCIe 4.0 storage, it handles 7B–13B local LLMs via Ollama and is one of the fastest CPU-only mini PCs for AI inference available in 2026.

The KAMRUI Pinova P1 is an affordable AMD mini PC for entry-level local AI inference. Powered by the Ryzen 3 4300U with 16GB DDR4 and 512GB SSD, it runs 7B LLMs via Ollama on Windows 11 Pro and supports triple 4K display output, in a compact black chassis with orange accents.

The KAMRUI Pinova P2 is a compact AMD mini PC for entry-level local AI inference. Powered by the Ryzen 3 4300U with 16GB LPDDR4 and 512GB SSD, it runs 7B LLMs via Ollama and supports triple 4K display output, all in a silver chassis at a budget-friendly price.

The Kensington SD5700T is a reliable hybrid Thunderbolt 4 dock supporting both Mac and Windows with dual 4K, 90W charging, and Kensington's enterprise-grade build quality at $169.

The Microsoft Surface Laptop 7 13.8" is the top-rated Copilot+ PC — Snapdragon X Elite, 45 TOPS NPU, 16GB LPDDR5X, 20-hour battery, and a brilliant 120Hz touch display. Runs Phi-3 and Mistral 7B locally without cloud.

The Microsoft Surface Laptop 7 15" brings the 45 TOPS Snapdragon X Elite NPU to a 15" PixelSense 120Hz touch display with 1TB storage — best large-screen Copilot+ PC for AI workflows.

The MINISFORUM N5 Air is the most powerful AI NAS available — 5-bay, AMD Ryzen 7, USB4, a PCIe x16 slot for eGPU, OCuLink for external GPU inference, and dual 10GbE/5GbE networking.

The MSI RTX 4070 Ti Super Ventus 3X OC pairs Ada Lovelace architecture with 16GB GDDR6X at 672 GB/s — the same memory bandwidth as the newer RTX 5070, but with 4GB more VRAM at a lower price point. For local AI builders who need 13B Q8 headroom without paying for Blackwell, this is the value pick in the 16GB GPU tier.

The MSI RTX 4090 is the previous-generation flagship and still the most capable GPU in this roundup for local LLM inference, with 24GB GDDR6X and 1008 GB/s bandwidth. It runs 32B models at Q4 quantization fully in GPU memory, making it the only consumer GPU under $2000 with a clear path to larger models without CPU offloading.

The MSI RTX 5080 Gaming Trio OC is NVIDIA's near-flagship Blackwell GPU, delivering 960 GB/s GDDR7 bandwidth with 16GB VRAM. That is fast enough to run 13B models at Q8 quantization and generate FLUX.1 images in under 2 seconds. The TRI FROZR 4 cooler keeps thermals flat during sustained AI inference without the size or power draw of the RTX 5090.

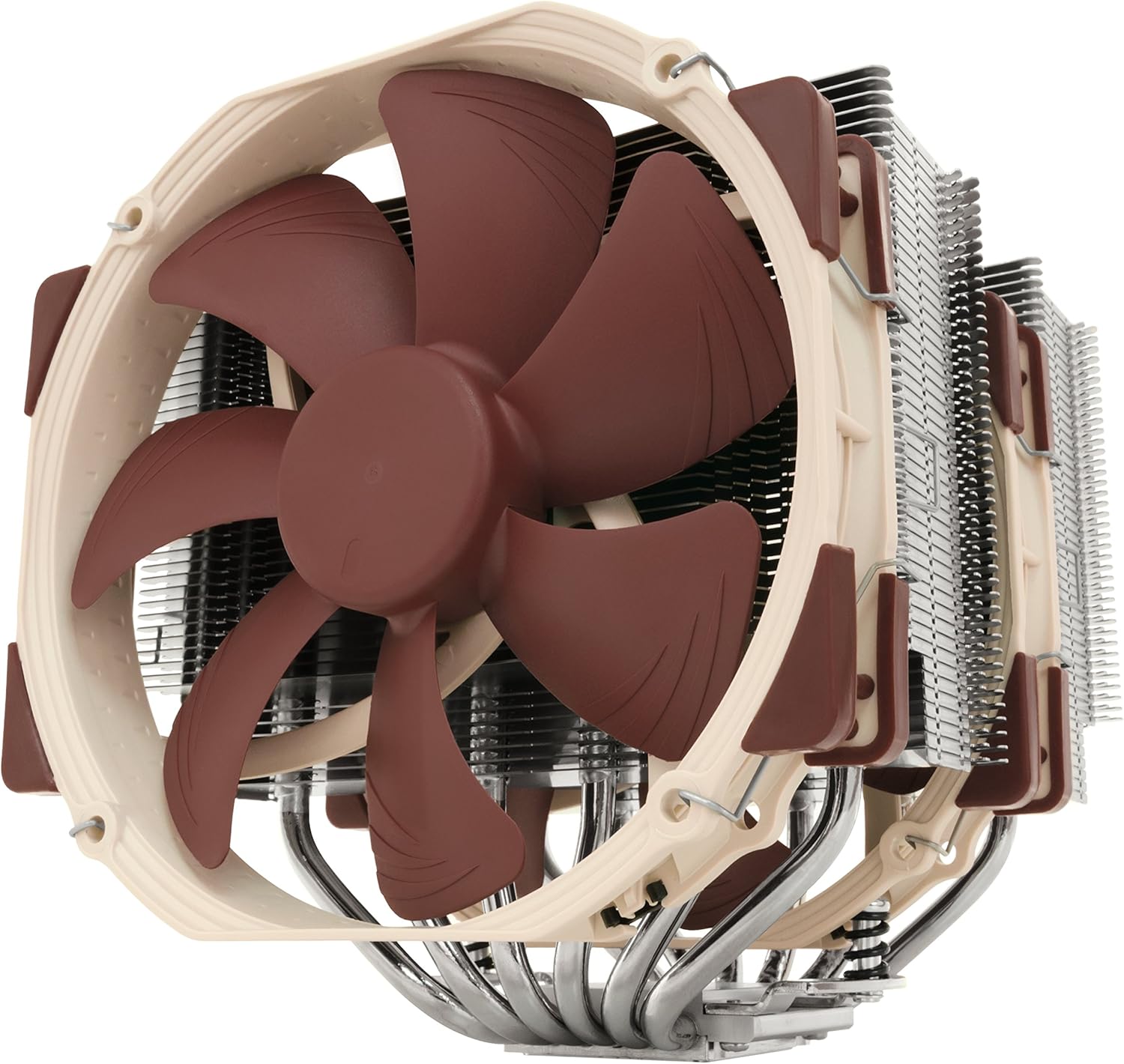

The Noctua NH-D15 is the benchmark air cooler for AI PC builders — its dual NF-A15 140mm fans and twin-tower heatsink dissipate 250W+ of sustained CPU load silently, making it the right choice for Ryzen 9 or Core i9 systems running continuous LLM inference where fan noise and cooling headroom matter.

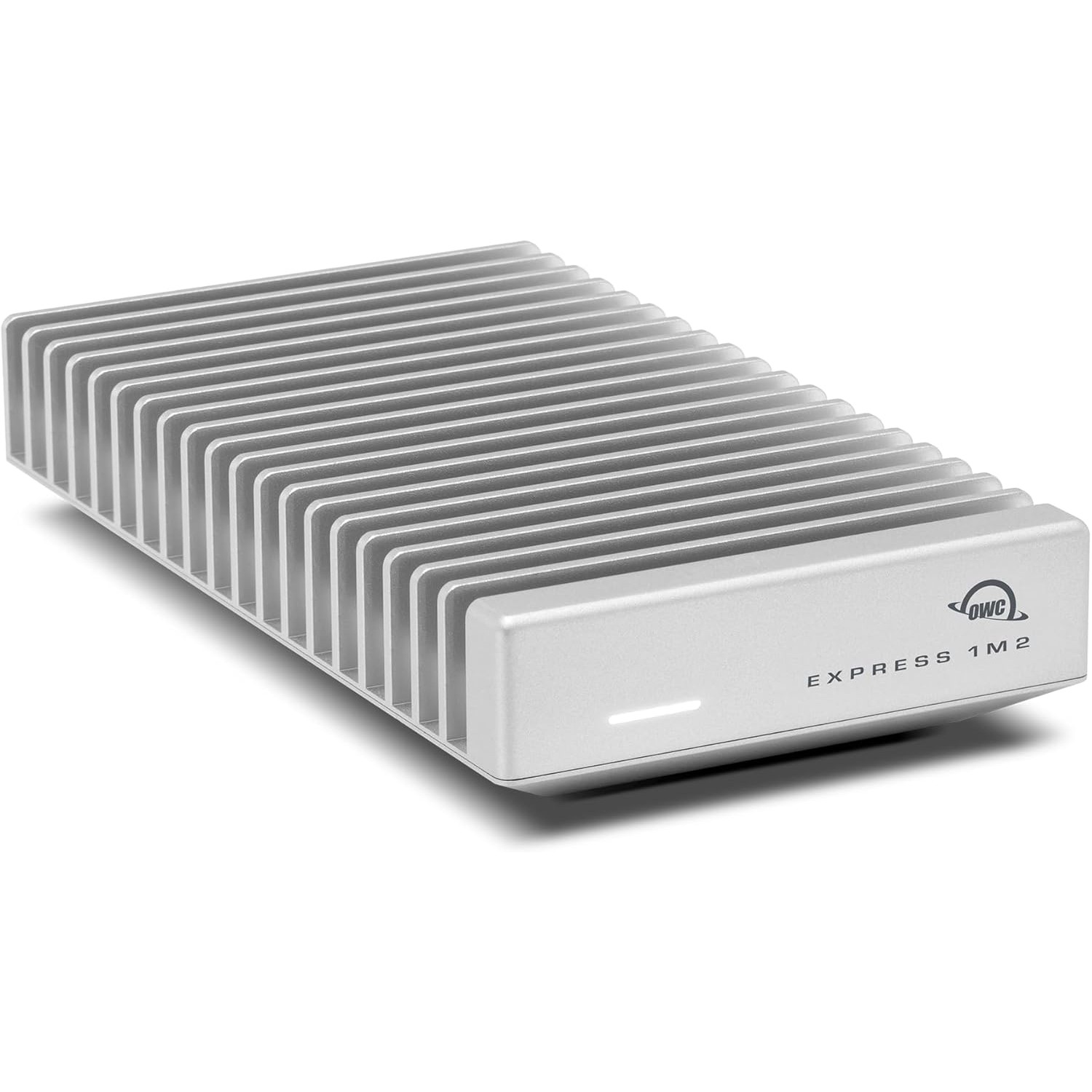

The OWC Envoy Express is a fanless Thunderbolt NVMe enclosure machined from aircraft-grade aluminum. Over its 40Gbps Thunderbolt link, an NVMe drive in the enclosure serves as fast external storage, which makes it a practical way to expand Mac Mini storage for large LLM libraries without long load times.

The Plugable TBT4-UD5 delivers 13 ports including 100W charging, dual 4K, 2.5GbE Ethernet, and 7 USB ports — the best TB4 dock for port density at $199.

The Samsung 990 PRO 4TB is the highest-capacity PCIe 4.0 NVMe SSD in Samsung's lineup — purpose-built for AI builders who store multiple large model families without constant weight management. At 7,450 MB/s sequential read, model loading times are negligible even for 70B quantized weights.

The Samsung Galaxy Book5 Pro 360 is the best 2-in-1 Copilot+ PC — Intel Core Ultra 7 256V, 47 TOPS NPU, 16" 3K AMOLED 120Hz touch, convertible form factor, and Windows 11 Pro for tablet-mode AI use.

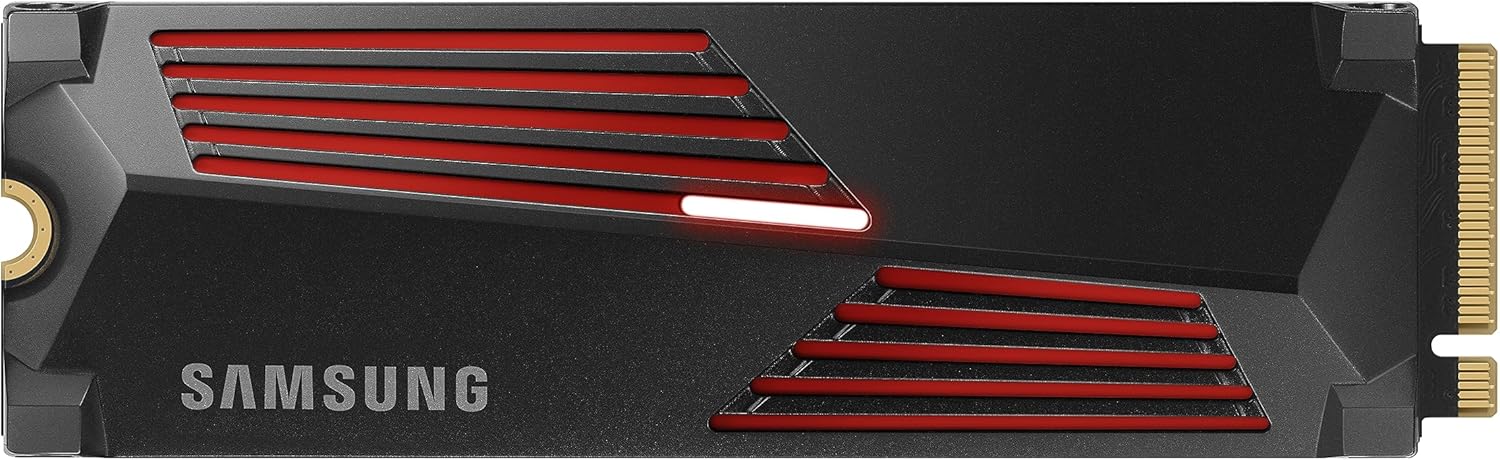

The Samsung 9100 PRO is the fastest consumer NVMe SSD available — 14,700 MB/s sequential read over PCIe 5.0 halves model loading times compared to PCIe 4.0 drives, and Samsung markets it explicitly for AI computing. For builders with a PCIe 5.0 M.2 slot, this is the correct storage foundation for a serious AI workstation.
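The "halves model loading times" claim follows from dividing file size by sequential read speed. The sketch below works that arithmetic for the two Samsung drives in this list, using the quoted 7,450 MB/s and 14,700 MB/s figures. The 35 GB file size is an assumption (roughly a 70B model at 4-bit), the function name is hypothetical, and the result is a best-case lower bound, since real loads also depend on the file system, the CPU, and the inference runtime.

```python
def best_case_load_seconds(file_gb: float, read_mb_per_s: float) -> float:
    """Lower bound on load time: file size divided by sequential read speed.

    Assumption: 1 GB = 1000 MB and the drive sustains its rated speed throughout.
    """
    return file_gb * 1000 / read_mb_per_s


if __name__ == "__main__":
    model_gb = 35  # assumed size of a 70B model quantized to about 4 bits per weight
    drives = {
        "PCIe 4.0 at 7,450 MB/s": 7450,
        "PCIe 5.0 at 14,700 MB/s": 14700,
    }
    for label, speed in drives.items():
        seconds = best_case_load_seconds(model_gb, speed)
        print(f"{label}: at least {seconds:.1f} s")
```

With these inputs the PCIe 4.0 drive needs at least about 4.7 seconds and the PCIe 5.0 drive at least about 2.4 seconds, a ratio close to two.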

The Silkland DisplayPort 2.1 VESA Certified DP80 cable delivers 80Gbps display bandwidth — required for 4K@240Hz, 4K@540Hz, or 8K output from RTX 5070 and RX 9060 XT GPUs. Older DisplayPort 1.4 cables physically cannot carry the output these Blackwell and RDNA 4 cards produce.

The Silkland USB4 / Thunderbolt 5 braided cable delivers 80Gbps data and 120Gbps display bandwidth with 240W charging — USB-IF Certified. The reinforced braided sleeve makes it the durable choice for users who move their setup frequently or need tight cable management.

The Synology DS925+ is the best home NAS for storing local AI model weights — 4-bay diskless, AMD Ryzen V1500B, dual 2.5GbE, and Synology's industry-leading DSM software. Ideal for housing 70B model files and AI datasets.

The UGREEN DXP4800 Plus is the best value 4-bay NAS for AI builders — 10GbE networking included at $350, Intel Pentium Gold 8505, 8GB DDR5, dual M.2 NVMe slots, and 4K HDMI output.