Apple Mac Mini (M4, 2024)

The Apple Mac Mini M4 is the most affordable path to Apple Silicon AI inference in 2026. With 16GB of unified memory at 120 GB/s bandwidth and a 10-core CPU, it runs 7B-class models at roughly 40–50 tokens/second via Ollama, faster than competing mini PCs at the same price.

MEMORY: 16 GB

BANDWIDTH: 120 GB/s

TDP: 20W

MAX MODEL: 13B (Q4 quantized)

Running Llama 3.2 on the Mac Mini M4: Zero-Setup Local AI for $599

What Can You Run on This?

- Local LLM inference for 7B models (Llama 3, Phi-3, Gemma)

- Always-on home AI assistant server

- On-device coding companion (Continue.dev)

- Light Stable Diffusion inference (SD 1.5, SDXL slow)

Full Specifications

| Chip / Processor | Apple M4 |
|---|---|
| CPU Cores | 10 |
| GPU Cores | 10 |
| Unified Memory | 16 GB |
| Memory Bandwidth | 120 GB/s |
| Storage | 256 GB |
| TDP (Power Draw) | 20W |
| Max LLM Size | 13B (Q4 quantized) |
| Form Factor | Mini PC |
| AI Performance Benchmarks | |
| Tokens Per Second (7B) | 42 t/s |
| Tokens Per Second (13B) | 22 t/s |

- Unified Memory: Apple Silicon uses a single pool of fast RAM shared between CPU and GPU. More unified memory lets larger models run entirely at full bandwidth, with no PCIe bottleneck.

- Memory Bandwidth: How fast data moves between memory and the processor, measured in GB/s. Tokens per second scales nearly linearly with bandwidth, which makes this the single most important GPU spec for LLM speed.

- TDP (Power Draw): Thermal Design Power in watts, the maximum sustained power draw. Higher TDP generally means more performance but more heat and electricity cost. Important for 24/7 always-on setups.

- Max LLM Size: The largest language model this hardware can run with full GPU/unified-memory acceleration, at the specified quantization. Larger models require more memory.

Pros & Cons

Pros

- Lowest cost Apple Silicon entry point for local AI

- 16GB unified memory is enough for all 7B and most 13B Q4 models

- 20W TDP: among the cheapest AI-capable machines to run 24/7

- Near-silent: the fan is rarely audible under light-to-moderate AI workloads

- Native Ollama and llama.cpp Metal support

Cons

- 16GB ceiling limits 13B performance and rules out 34B+ models

- 120 GB/s bandwidth (vs 273 GB/s on M4 Pro) means noticeably slower 13B inference

- 10-core GPU less capable for Stable Diffusion vs M4 Pro

- Non-upgradeable memory — buy the right configuration upfront

Who Should NOT Buy This

Honest assessment

- Anyone who wants to run 70B models: 16 GB of unified memory limits you to the 7B–13B range

- Stable Diffusion power users — image gen is usable but not fast

- Anyone who needs Windows — macOS only, no exceptions

- Heavy multitaskers: 16 GB fills up quickly with the OS and a model loaded at the same time

Our Verdict

The Mac Mini M4 base model is the ideal entry-level AI machine for users who primarily run 7B models and want the Apple Silicon experience at the lowest cost. It runs Ollama out of the box, handles most popular LLMs, and consumes only 20W — making it practical to leave on 24/7 as a home AI server. If you anticipate running 13B+ models frequently, spend more and get the M4 Pro; otherwise, this is outstanding value.
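To put the 24/7 claim in numbers, here is a minimal sketch of the yearly running cost. It assumes the machine draws the full 20W around the clock (a worst case, since idle draw is lower) and uses a hypothetical electricity price of $0.15 per kWh; substitute your own tariff.

```python
def annual_energy_kwh(watts: float, hours_per_day: float = 24.0) -> float:
    """Energy used over one year, in kWh, at a constant power draw."""
    return watts * hours_per_day * 365 / 1000


def annual_cost(watts: float, price_per_kwh: float) -> float:
    """Yearly electricity cost for a device that runs 24 hours a day."""
    return annual_energy_kwh(watts) * price_per_kwh


if __name__ == "__main__":
    PRICE_PER_KWH = 0.15  # assumed tariff in USD, not a figure from this review
    TDP_WATTS = 20        # the TDP quoted in the spec table

    print(f"{annual_energy_kwh(TDP_WATTS):.1f} kWh per year")
    print(f"${annual_cost(TDP_WATTS, PRICE_PER_KWH):.2f} per year")
```

Under those assumptions the machine uses about 175 kWh a year, which is why leaving it on permanently is practical.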

Frequently Asked Questions

Q1. What is the difference between the Mac Mini M4 and M4 Pro for AI?

The M4 Pro has up to 14 CPU cores (vs 10), up to 20 GPU cores (vs 10), 273 GB/s memory bandwidth (vs 120 GB/s), and scales to 64GB RAM (vs 32GB max). In practice, the M4 Pro runs 13B models about 2x faster and is the only Mac Mini option capable of running 70B models (with the 64GB upgrade). The base M4 is better suited to 7B-focused workflows.

Q2. Can the Mac Mini M4 run Stable Diffusion?

Yes, with moderate performance. SD 1.5 at 512×512 runs at 6–10 it/s. SDXL is slow (20–40 seconds per image). For serious image generation, the M4 Pro or a discrete GPU like the RTX 4070 Super is significantly better. The base M4 is adequate for occasional use but not for production workflows.

Q3. How fast does the Mac Mini M4 run 7B models?

With Ollama and the Metal backend, the Mac Mini M4 runs Llama 3.2 3B at approximately 80–100 tokens/second and Llama 3.1 8B at 40–50 tokens/second. Phi-3 Mini (3.8B) runs at 90+ t/s. These speeds are interactive and real-time — you'll see text streaming as fast as you can read. For context, a Windows mini PC at the same price runs 7B at 10–16 t/s via CPU.

Q4. Should I buy the Mac Mini M4 16GB or 24GB?

For AI use, the 24GB upgrade is strongly recommended if budget allows. With 16GB, you can run 7B models comfortably, but a 13B Q4 model (~8GB of weights, plus context cache and runtime overhead) leaves only ~6GB for the OS, which is tight and sometimes unstable. The 24GB version fits a 13B Q4 model (~8GB) alongside 8GB for macOS with room to spare, giving you full use of the model range. If you plan to run only 7B models, 16GB is sufficient.

Q5. How does the Mac Mini M4 compare to the GEEKOM A6 for local AI?

The Mac Mini M4 is significantly faster for inference: ~42 t/s vs ~16 t/s for 7B models, roughly 2.6× faster. The GEEKOM A6 ships with more RAM (32GB DDR5) than the 16GB base Mac Mini and can attempt 32B models via CPU, while the M4 Mac Mini reaches 32GB only as a purchase-time upgrade. The Mac Mini M4 wins on speed, noise, and plug-and-play setup. The GEEKOM A6 wins on raw memory capacity at this price and has a USB4 port for an eGPU upgrade. For most users, the Mac Mini M4 is the better daily driver.
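A quick way to feel the gap between the two throughput figures is to convert them into waiting time for a typical reply. The sketch below uses the ~42 t/s and ~16 t/s numbers quoted above; the 400-token reply length is an arbitrary assumption chosen for illustration, and prompt processing time is ignored.

```python
def seconds_to_generate(tokens: int, tokens_per_second: float) -> float:
    """Time to stream a reply of the given length at a steady decode speed."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return tokens / tokens_per_second


def speedup(fast_tps: float, slow_tps: float) -> float:
    """How many times faster the first machine generates tokens."""
    return fast_tps / slow_tps


if __name__ == "__main__":
    REPLY_TOKENS = 400  # assumed reply length, for illustration only
    MAC_TPS, GEEKOM_TPS = 42, 16  # 7B figures quoted in this review

    print(f"Mac Mini M4: {seconds_to_generate(REPLY_TOKENS, MAC_TPS):.1f} s")
    print(f"GEEKOM A6:   {seconds_to_generate(REPLY_TOKENS, GEEKOM_TPS):.1f} s")
    print(f"Speedup:     {speedup(MAC_TPS, GEEKOM_TPS):.2f}x")
```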

Q6. Can I use the Mac Mini M4 as a 24/7 AI server?

Yes, it's ideal for this. At 20W under load and near-silent under light inference, it can run continuously without meaningful electricity cost or noise. Set Ollama to run as a launchd service, expose port 11434 locally or via Tailscale, and any device on your network can query it. The M4's reliability and low fan noise make it one of the best always-on local AI setups available.
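As a rough sketch of what querying that server from another device could look like, the Python below posts to Ollama's `/api/generate` endpoint on port 11434 using only the standard library. The hostname `mac-mini.local` and the model tag `llama3.1:8b` are placeholders, not values from this review. It also assumes Ollama has been configured to listen on the network (for example via the `OLLAMA_HOST` environment variable), since by default it binds to localhost only.

```python
import json
import urllib.request

OLLAMA_PORT = 11434  # the port mentioned in the answer above


def build_generate_request(host: str, model: str, prompt: str) -> urllib.request.Request:
    """Build a non-streaming POST request for Ollama's /api/generate endpoint."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        f"http://{host}:{OLLAMA_PORT}/api/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(host: str, model: str, prompt: str, timeout: float = 120.0) -> str:
    """Send the prompt to the server and return the generated text."""
    request = build_generate_request(host, model, prompt)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["response"]


# Example call (placeholder host and model, requires a reachable server):
# print(ask("mac-mini.local", "llama3.1:8b", "Say hello in one sentence."))
```

Setting `"stream": False` returns the whole reply as a single JSON object, which keeps the client simple; a chat UI would more likely leave streaming on and read the reply line by line.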

Q7. What's the maximum model size the Mac Mini M4 can run?

With 16GB unified memory: up to 13B Q4 (requires ~8GB plus cache overhead, leaving ~6GB for the OS, which is tight). With 24GB: up to 13B Q8 (~13GB) or 14B Q4 (~9GB) comfortably. Models that do not fit in memory spill to disk, which drops performance dramatically. If you need 34B or 70B models, the Mac Mini M4 Pro with 64GB is the appropriate upgrade path within the Mac Mini lineup.
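The sizes quoted above follow from simple arithmetic: parameter count times bits per weight. Here is a minimal estimator, assuming about 4.5 effective bits per weight for Q4 and 8 bits for Q8 (rough figures; real quantization formats vary) and a hypothetical 6GB reserved for macOS and other apps. It counts weights only, so context cache and runtime overhead come on top.

```python
def weights_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


def fits(params_billion: float, bits_per_weight: float,
         total_gb: float, reserved_gb: float) -> bool:
    """True if the weights fit in the memory left after the OS reservation."""
    return weights_gb(params_billion, bits_per_weight) <= total_gb - reserved_gb


if __name__ == "__main__":
    Q4_BITS, Q8_BITS = 4.5, 8.0  # assumed effective bits per weight
    RESERVED_GB = 6.0            # assumed headroom for macOS, not a measured value

    for label, params, bits, total in [
        ("13B Q4 on 16GB", 13, Q4_BITS, 16),
        ("13B Q8 on 24GB", 13, Q8_BITS, 24),
        ("70B Q4 on 16GB", 70, Q4_BITS, 16),
    ]:
        size = weights_gb(params, bits)
        verdict = "fits" if fits(params, bits, total, RESERVED_GB) else "does not fit"
        print(f"{label}: ~{size:.1f} GB of weights, {verdict}")
```

Because the estimate leaves out the context cache, treat a result that fits with little margin as tight rather than comfortable.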

Q8. Does the Mac Mini M4 support Whisper audio transcription?

Yes. Whisper.cpp with Metal support runs on the M4, transcribing audio at faster-than-realtime for most inputs. The 10-core GPU handles Whisper small and medium models efficiently. For large-v3 (the most accurate Whisper model), expect 1–2× real-time speed — slower but still practical for batch transcription tasks.

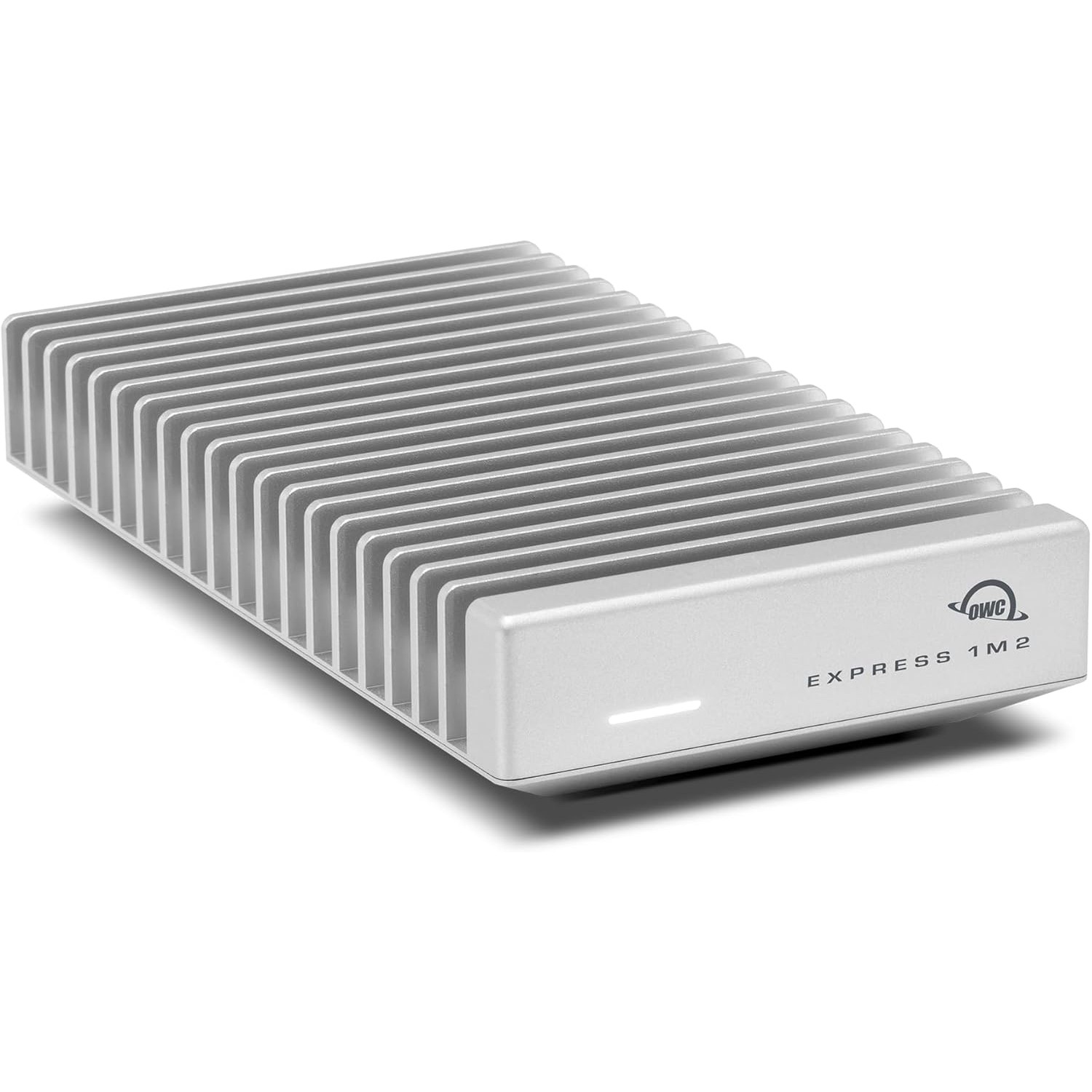

Don't Bottleneck Your Rig

Accessories that unlock this hardware's full potential

Setup Guides

Step-by-step instructions for this hardware

Also Featured In

Compare With

As an Amazon Associate I earn from qualifying purchases.

Apple Mac Mini (M4, 2024)

Check Price on Amazon