MSI GeForce RTX 5080 16G Gaming Trio OC

The MSI RTX 5080 Gaming Trio OC is built on NVIDIA's near-flagship Blackwell GPU, pairing 16GB of GDDR7 VRAM with 960 GB/s of memory bandwidth: fast enough to run 13B models at Q8 and generate FLUX.1 images in under 2 seconds. The TRI FROZR 4 cooler keeps thermals stable during sustained AI inference, without the size or power draw of the RTX 5090.

- VRAM: 16 GB

- Bandwidth: 960 GB/s

- TDP: 360W

- Max model: 13B (full Q8)

MSI RTX 5080 Gaming Trio OC: 155 Tokens Per Second and 13B Q8 in VRAM

What Can You Run on This?

- Local LLM inference fully in VRAM: 7B at FP16, 13B at Q8

- FLUX.1 dev and SDXL image generation in under 2 seconds per image

- ComfyUI multi-model workflows with ControlNet and LoRA stacking

- Whisper large-v3 audio transcription at real-time speeds

- CUDA-accelerated AI development and PyTorch training

Full Specifications

| Specification | Value |
|---|---|
| Chip / Processor | NVIDIA GeForce RTX 5080 (Blackwell, GB203) |
| GPU Cores | 10752 |
| VRAM | 16 GB |
| Memory Bandwidth | 960 GB/s |
| TDP (Power Draw) | 360W |
| Max LLM Size | 13B (full Q8) |
| Form Factor | GPU |
| **AI Performance Benchmarks** | |
| Tokens Per Second (7B) | 155 t/s |
| Tokens Per Second (13B) | 90 t/s |
| SDXL Generation Time | 1.6s |

- VRAM: Video RAM, the dedicated memory on a GPU. It determines the maximum model size you can run with full GPU acceleration. Once a model exceeds VRAM, it spills to system RAM over the slow PCIe bus.

- Memory Bandwidth: how fast data moves between memory and the processor, measured in GB/s. Tokens per second scales nearly linearly with bandwidth, which makes this the single most important GPU spec for LLM speed.

- TDP (Power Draw): Thermal Design Power in watts, the maximum sustained power draw. Higher TDP generally means more performance but more heat and electricity cost. Important for 24/7 always-on setups.

- Max LLM Size: the largest language model this hardware can run with full GPU/unified-memory acceleration, at the specified quantization. Larger models require more memory.

Pros & Cons

Pros

- 960 GB/s GDDR7: 43% more bandwidth than the RTX 5070's 672 GB/s, and tokens per second scale almost linearly with bandwidth

- 16GB VRAM: runs 13B at Q8, avoiding the quality loss of the Q4 quantization required on 12GB cards

- Blackwell 5th-Gen Tensor Cores — meaningfully faster per watt than Ada Lovelace

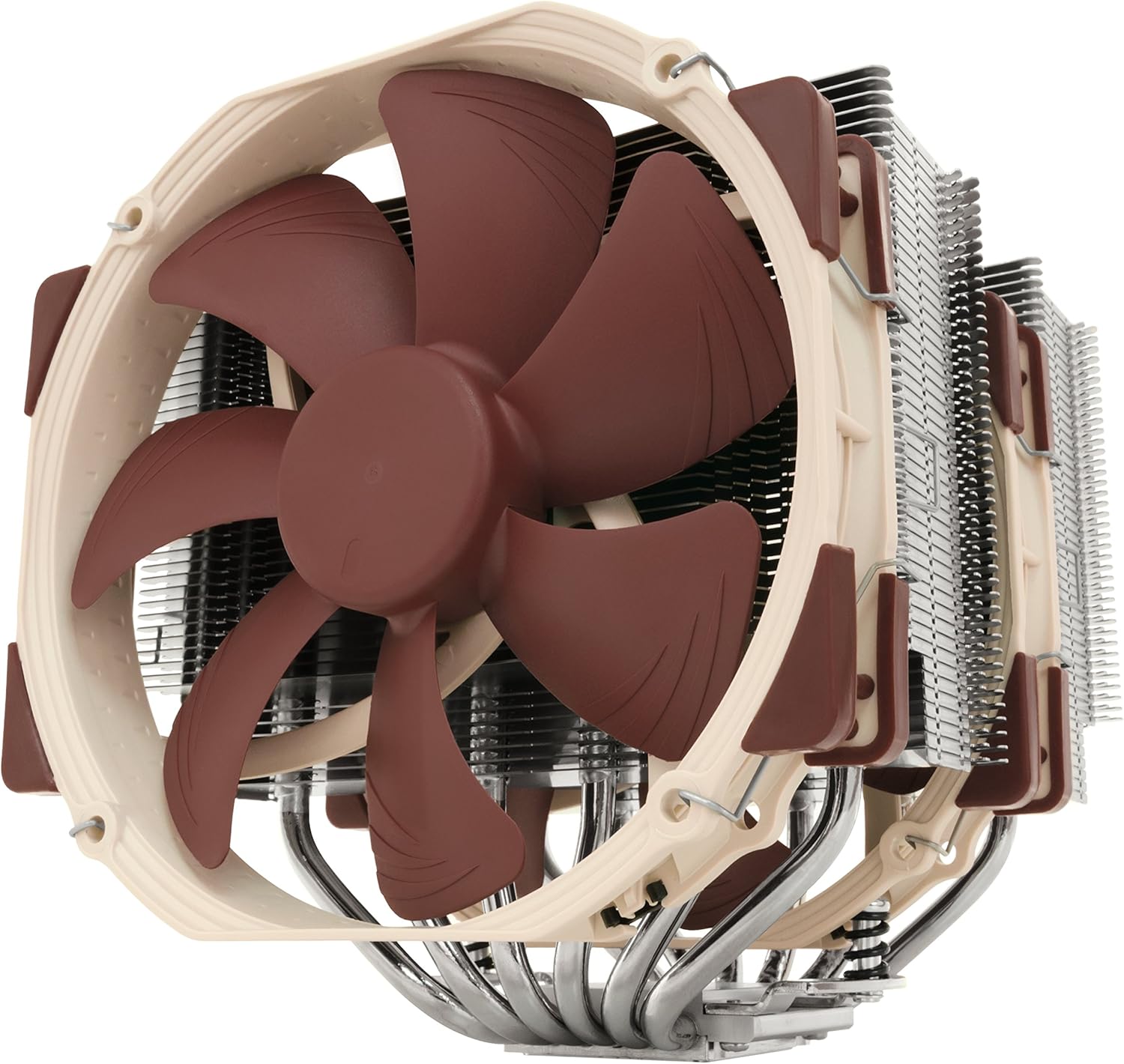

- TRI FROZR 4 cooling — 3 large fans keep thermals stable under 24/7 inference load

- PCIe 5.0 interface — future-proof for AI data throughput from NVMe storage

- HDMI 2.1b + DisplayPort 2.1b — 8K display support

Cons

- 360W TDP — requires 850W+ PSU and strong case airflow

- 16GB VRAM cannot hold 32B Q4 (~20GB) entirely: 32B needs partial CPU offload, and 70B needs substantial offload

- Premium price over the RTX 5070, justified only if 13B Q8 precision matters

- Full-size triple-slot cooler — won't fit compact ITX cases

Who Should NOT Buy This

Honest assessment

- Budget buyers — the RTX 5070 costs significantly less for 70% of the performance

- 70B model users — 16GB VRAM still requires CPU offload for 70B Q4 (~40GB)

- Mini PC or compact build users — 360W TDP needs full ATX airflow

- macOS users — NVIDIA requires Windows or Linux

Our Verdict

The MSI RTX 5080 Gaming Trio OC is the sweet spot between price and performance for serious local AI builders who've outgrown 12GB cards. The 16GB GDDR7 at 960 GB/s enables 13B models at full Q8 precision — a meaningful quality jump over the Q4 quantization required on 12GB cards — while FLUX.1 image generation is nearly twice as fast as the RTX 5070. If the RTX 5090's $2000+ price is hard to justify, the RTX 5080 is where most professional local AI workflows top out.

Frequently Asked Questions

Q1. What LLM models can the RTX 5080 16GB run fully in VRAM?

With 16GB VRAM, the RTX 5080 runs 7B models at full FP16 precision (~14GB), 13B models at Q8 quantization (~14GB), and 13B at Q4 (~8GB) with plenty of headroom. 32B Q4 models (~20GB) require slight CPU offload. 70B models need substantial CPU RAM offloading and will be much slower.
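The sizes quoted above follow from simple arithmetic: parameter count times bits per weight. The sketch below reproduces them approximately. The bits-per-weight values are assumptions (roughly what common GGUF Q8 and Q4 variants store, including scaling metadata), and the check covers weights only, so the KV cache and runtime buffers need additional headroom.

```python
# Approximate bits stored per weight for each format. These are assumed
# values for illustration; real model files vary by quantization variant.
BITS_PER_WEIGHT = {"FP16": 16.0, "Q8": 8.5, "Q4": 4.85}

VRAM_GB = 16.0  # RTX 5080, from the spec table


def weights_gb(params_billion: float, fmt: str) -> float:
    """Estimate the size of the model weights in GB (1 GB = 1e9 bytes)."""
    return params_billion * BITS_PER_WEIGHT[fmt] / 8.0


def fits_in_vram(params_billion: float, fmt: str, vram_gb: float = VRAM_GB) -> bool:
    """True if the weights alone fit; KV cache and buffers need extra room."""
    return weights_gb(params_billion, fmt) <= vram_gb


for size, fmt in [(7, "FP16"), (13, "Q8"), (13, "Q4"), (32, "Q4"), (70, "Q4")]:
    status = "fits" if fits_in_vram(size, fmt) else "needs CPU offload"
    print(f"{size}B {fmt}: ~{weights_gb(size, fmt):.1f} GB -> {status}")
```

Under these assumptions the estimates land within a few GB of the figures quoted in this answer, and the fit/offload split matches it: 7B FP16 and 13B Q8 fit, while 32B Q4 and 70B Q4 do not.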

Q2. How much faster is the RTX 5080 vs the RTX 5070 for LLMs?

Approximately 30–40% faster. The RTX 5080 has 960 GB/s vs 672 GB/s on the RTX 5070 — bandwidth is the primary determinant of tokens/second for quantized LLM inference. Expect ~155 t/s on Llama 3.1 8B vs ~118 t/s on the RTX 5070. For 13B models, the RTX 5080 also benefits from running at higher precision (Q8 vs Q4), improving output quality.
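To see why bandwidth dominates, consider that generating one token requires reading roughly every weight once, so bandwidth divided by model size gives a rough ceiling on tokens per second. The sketch below compares the bandwidth ratio with the quoted throughput figures. The 4.9 GB model size for an 8B Q4 model is an assumption for illustration, and the ceiling ignores compute, KV cache reads, and software overhead.

```python
RTX_5080_BW_GBS = 960.0  # from the spec table
RTX_5070_BW_GBS = 672.0  # quoted above


def ratio(a: float, b: float) -> float:
    """How many times larger a is than b."""
    return a / b


def decode_ceiling_tps(bandwidth_gb_s: float, model_gb: float) -> float:
    """Rough upper bound on tokens/s: every token reads all weights once."""
    return bandwidth_gb_s / model_gb


bandwidth_gain = ratio(RTX_5080_BW_GBS, RTX_5070_BW_GBS)
measured_gain = ratio(155.0, 118.0)  # t/s figures quoted above

ASSUMED_8B_Q4_GB = 4.9  # hypothetical model size, not a measured value
ceiling = decode_ceiling_tps(RTX_5080_BW_GBS, ASSUMED_8B_Q4_GB)

print(f"Bandwidth gain: {bandwidth_gain:.2f}x")
print(f"Quoted throughput gain: {measured_gain:.2f}x")
print(f"Bandwidth ceiling for the assumed model: {ceiling:.0f} t/s")
```

The quoted throughput gain comes out somewhat below the raw bandwidth gain, which is consistent with "approximately 30–40% faster": real workloads never reach the full bandwidth ceiling.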

Q3. Does the RTX 5080 work with Ollama and LM Studio?

Yes. Both Ollama and LM Studio support NVIDIA CUDA out of the box on Windows and Linux. The RTX 5080 is detected automatically, and models that fit in 16GB VRAM are fully GPU-accelerated. No additional configuration is required beyond installing the NVIDIA driver.
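If you want to check throughput on your own card, Ollama's local HTTP API reports token counts and timings with each response. The following is a minimal sketch, assuming Ollama is running on its default port and the model you name has already been pulled. The helper names `generate` and `tokens_per_second` are illustrative, not part of Ollama.

```python
import json
import urllib.request

# Ollama's default local endpoint; adjust if your install differs.
OLLAMA_URL = "http://localhost:11434/api/generate"


def generate(model: str, prompt: str, url: str = OLLAMA_URL) -> dict:
    """Send one non-streaming generation request and return the JSON reply."""
    payload = json.dumps({"model": model, "prompt": prompt, "stream": False})
    request = urllib.request.Request(
        url,
        data=payload.encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as response:
        return json.load(response)


def tokens_per_second(result: dict) -> float:
    """Compute decode speed; Ollama reports eval_duration in nanoseconds."""
    return result["eval_count"] / result["eval_duration"] * 1e9
```

A typical call would be `tokens_per_second(generate("llama3.1:8b", "Explain VRAM in one sentence."))`, where the model tag is just an example. Results depend on the model, quantization, and prompt length, so treat a single run as indicative rather than a benchmark.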

Q4. What PSU is needed for the RTX 5080?

NVIDIA recommends an 850W PSU minimum for RTX 5080 systems. For AI inference workloads that sustain GPU load for extended periods, 1000W is a more comfortable margin — especially if paired with a power-hungry CPU like an Intel Core i9 or AMD Ryzen 9.
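The headroom argument is easy to check with a quick power budget. In this sketch only the 360W GPU figure comes from the spec table. The CPU and "everything else" wattages are hypothetical placeholders, so substitute the numbers for your own build.

```python
GPU_TDP_W = 360  # from the spec table


def psu_load_fraction(psu_watts: float, cpu_watts: float, other_watts: float,
                      gpu_watts: float = GPU_TDP_W) -> float:
    """Fraction of PSU capacity used when every component is at full load."""
    return (gpu_watts + cpu_watts + other_watts) / psu_watts


# Hypothetical build: a high-end CPU plus drives, fans, RAM, and motherboard.
ASSUMED_CPU_W = 250
ASSUMED_OTHER_W = 75

for psu in (850, 1000):
    load = psu_load_fraction(psu, ASSUMED_CPU_W, ASSUMED_OTHER_W)
    print(f"{psu}W PSU: {load:.0%} of capacity at sustained full load")
```

With these assumed numbers an 850W unit runs at roughly four fifths of capacity under sustained load, while a 1000W unit leaves noticeably more margin.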


As an Amazon Associate I earn from qualifying purchases.
